Out of the box, local LLMs are really dumb. They simply answer some prompts. No internet. No memory. No tools. Just a model answering from whatever was already inside its weights.

I was curious to see what these models can do and why they are so stupid, so for the past month or two I’ve been building my own little Frankenstein: a local-LLM, ChatGPT-Codex-style VS Code plugin named Ducky.

I taught Ducky to talk to whatever model llama-server is DJ-ing locally, via an OpenAI-compatible API, and gave it direct website fetching and Playwright, so it can browse real pages instead of pretending to know what is online.
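For the curious, talking to llama-server over its OpenAI-compatible API boils down to something like the sketch below. This is not Ducky's actual code. The address (llama-server's default local port), the `"local"` model name and both function names are assumptions for illustration.

```python
import json
import urllib.request

# Assumption: llama-server is running locally on its default port.
BASE_URL = "http://127.0.0.1:8080/v1"


def build_chat_request(prompt, model="local", base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # placeholder name, adjust to your setup
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, **kwargs):
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Say hi in five words")` only works while the server is up. Everything else (browsing, tools, memory) is client code wrapped around this one call.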

I added named sessions, so conversations survive across runs.
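A named session can be as simple as one JSON file of messages per name. Here is a minimal sketch of that idea. The `SessionStore` class and its file layout are hypothetical, not Ducky's real storage format.

```python
import json
from pathlib import Path


class SessionStore:
    """Keep each named conversation as a JSON list of messages on disk."""

    def __init__(self, root):
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)

    def _path(self, name):
        return self.root / f"{name}.json"

    def load(self, name):
        """Return the saved messages, or an empty list for a new session."""
        path = self._path(name)
        if not path.exists():
            return []
        return json.loads(path.read_text(encoding="utf-8"))

    def append(self, name, role, content):
        """Add one message to the session and write it back to disk."""
        messages = self.load(name)
        messages.append({"role": role, "content": content})
        self._path(name).write_text(
            json.dumps(messages, indent=2), encoding="utf-8"
        )
        return messages
```

On the next run, `load("photo-tagging")` gives back the full history, which can be sent as the `messages` list of the next request. That is the entire "memory" trick.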

Then I built browser-action traces, screenshots, planner prompts (BIG THANKS to the Claude leak!), raw model output, and failure diagnostics so I can actually debug what the agent is doing.

Then I sat down and thought about a real problem to solve.

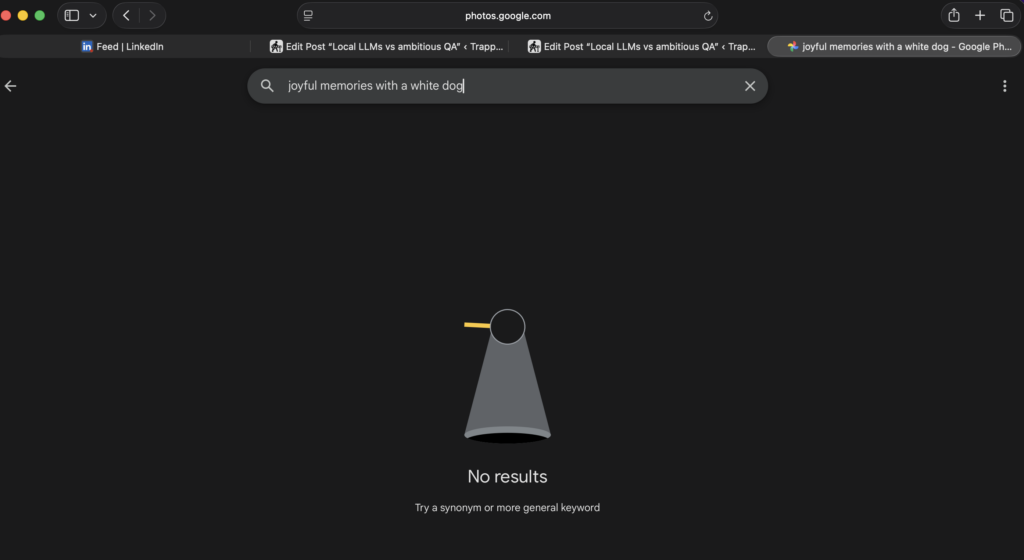

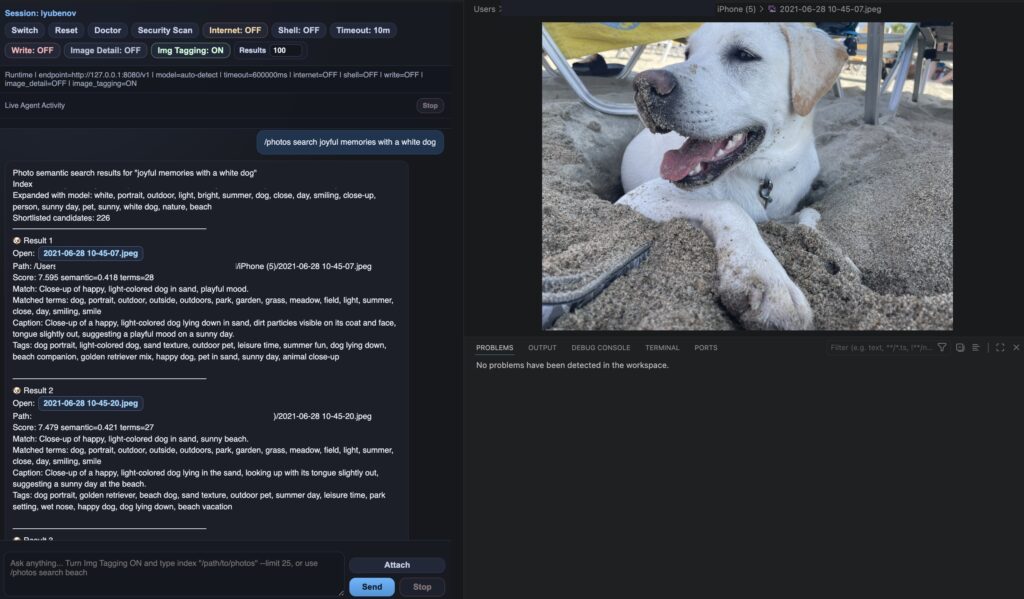

As a photographer, the biggest problem I have is organising photos. My phone backup folder alone holds more than 28,000 photos that I will never, ever organise manually, and most probably will never search through photo by photo. So I decided to use the local model’s vision to tag my photos. Basically, I ambitiously wanted to see if the best feature of Google Photos can be tossed away. The plan was to give Ducky a command, and it then scans a cloud or local folder and tags every photo in the directory, producing JSON files and indexes.
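Roughly, the tagging pass could look like the sketch below. It is an illustration under assumptions, not Ducky's implementation: the sidecar naming (`photo.jpg.json`), the `index.json` file and the function names are made up, and `describe` stands in for whatever function sends the message to a vision-capable local model and returns its text.

```python
import base64
import json
import mimetypes
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".webp"}


def vision_message(image_path, instruction="Describe this photo in detail."):
    """Build an OpenAI-style user message with the image as a data URL."""
    path = Path(image_path)
    mime = mimetypes.guess_type(path.name)[0] or "image/jpeg"
    data = base64.b64encode(path.read_bytes()).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": instruction},
            {"type": "image_url",
             "image_url": {"url": f"data:{mime};base64,{data}"}},
        ],
    }


def tag_folder(folder, describe):
    """Write one JSON sidecar per photo and return the combined index."""
    index = []
    for path in sorted(Path(folder).rglob("*")):
        if not path.is_file() or path.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        sidecar = path.with_suffix(path.suffix + ".json")
        if sidecar.exists():
            # Tagged on an earlier run: reuse it, so the job is resumable.
            record = json.loads(sidecar.read_text(encoding="utf-8"))
        else:
            record = {"file": str(path),
                      "description": describe(vision_message(path))}
            sidecar.write_text(json.dumps(record, indent=2), encoding="utf-8")
        index.append(record)
    index_path = Path(folder) / "index.json"
    index_path.write_text(json.dumps(index, indent=2), encoding="utf-8")
    return index
```

Skipping photos that already have a sidecar matters with tens of thousands of files, because a crashed or interrupted run can simply be started again.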

Did it work?

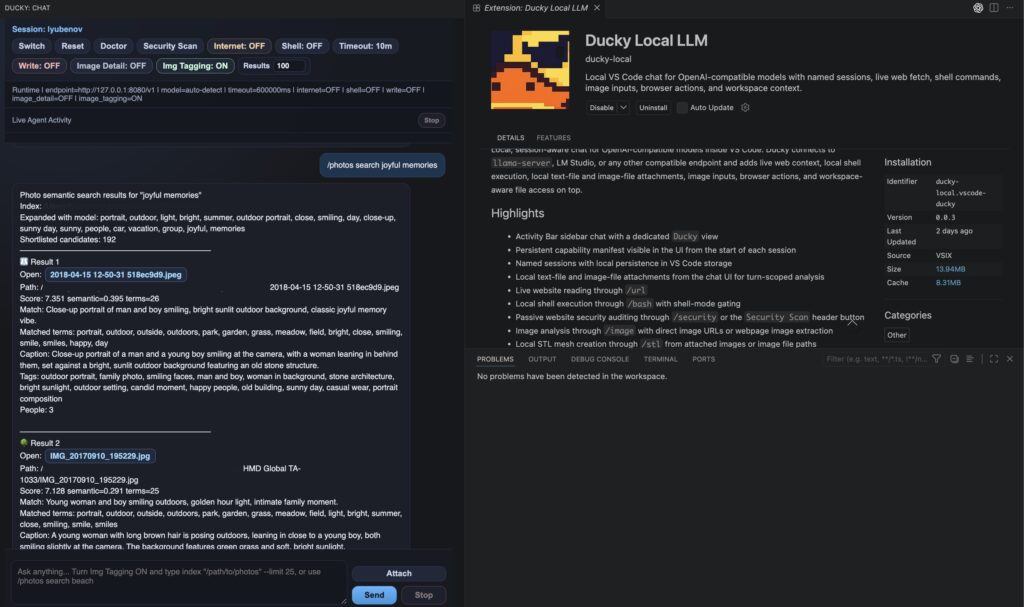

Initially, just like Google Photos, it was able to tag basic things like people, cats and dogs. But I plot-twisted that with another pass: now each search runs the index through the local LLM again with a further prompt, so it can connect the dots and interpret what can pass as “joyful memories with a white dog” based on all of the detailed descriptions of the photos, resulting in a rank-sorted search result. And just like that, a service from Google themselves is now outclassed by some random NPC with some spare time, ironically with the help of Google’s own local LLM (Gemma 4).
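The second pass can be sketched like this. Again, this is an assumption-heavy illustration and not the real Ducky prompt: the prompt wording, the JSON reply format, the `min_score` cutoff and the function names are all invented, and `ask` is any function that takes a prompt string and returns the model's text.

```python
import json


def build_rank_prompt(query, records):
    """Ask the model to score each photo description against the query."""
    lines = [f"{i}: {r['description']}" for i, r in enumerate(records)]
    return (
        "Score how well each photo description matches the search query.\n"
        f"Query: {query}\n"
        "Photos:\n" + "\n".join(lines) + "\n"
        'Reply with JSON only, e.g. [{"id": 0, "score": 0.9}].'
    )


def search(query, records, ask, min_score=0.5):
    """Return matching records, best score first."""
    raw = ask(build_rank_prompt(query, records))
    try:
        scored = json.loads(raw)
    except json.JSONDecodeError:
        return []  # small models sometimes break the format
    if not isinstance(scored, list):
        return []
    hits = []
    for item in scored:
        if not isinstance(item, dict):
            continue
        idx, score = item.get("id"), item.get("score")
        if (isinstance(idx, int) and 0 <= idx < len(records)
                and isinstance(score, (int, float)) and score >= min_score):
            hits.append((score, records[idx]))
    hits.sort(key=lambda hit: hit[0], reverse=True)
    return [record for _, record in hits]
```

A full index of thousands of descriptions will not fit into a small model's context in one go, so in practice the records would have to be scored in batches and the results merged before sorting.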

The interesting bit is that the model itself did not get smarter. My pale Linux tagging box runs a 4B Gemma 4. What is amusing in this case is that a smarter frontier model like ChatGPT pushed the local model to its limits, with hundreds of optimisations in the “backend” client, to make it behave smarter and actually be useful for the specific job.

Local models are not useless anymore. They are awesome and scary. I would argue that the biggest mistake big tech made during the AI bubble was letting them slip out of the big players’ hands. So now a QA photographer is able to build a Frankenstein when bored. Don’t believe me? Hell, just look at what the UI has become! 🤣

Seriously, if someone had told me that within 3 years of the first ChatGPT I would be able to make real, startup-style software that could actually solve all kinds of computer problems, just for fun, I would have LOL’d my ass off.
